I Love Debugger

Learning to Love Bugs

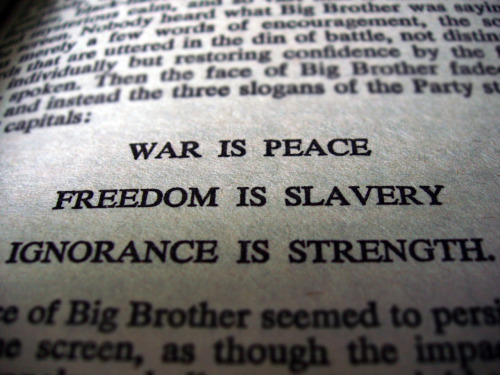

My apologies for the titular pun in reference to “I Love Big Brother” of iconic, Orwellian fame, but I couldn’t resist. The other day, I was chatting with some people about the idea of factoring large methods into smaller, more focused ones and one of the people chimed in with an objection that was genuinely new to me.

Specifically, the objection was that giant methods tended to be preferable because they kept the stack trace flat and made it easier to have everything “all in one place” when you were (inevitably) going through the code in the debugger. My first, fleeting thought was to wonder if people really found it that difficult to ctrl-tab between classes, but I quickly realized that this was hardly the important problem here (and really, to each his or her own). The bigger problem, as I explained a moment later but have thought through in a bit more detail for a blog post now, is that you’re writing code more likely to generate defects so that when you’re tasked with fixing those defects, you feel more comfortable.

This is like a general housing contractor saying, “I prefer to use sand as a building material over wood or brick for houses I build because it’s much easier to work with in the morning after the tide destroys the house each night.”

Winston realized that two equals four and that the only way to prevent bugs is to cause them. Winston happily declared, “I love Debugger!”

More Bugs? Prove It!

So, if you’re a connoisseur of strict logic in debating, you’ll notice that I’ve begged the question here with my objection. That is, I ‘proved’ the reasoning fallacious by assuming that larger methods mean more bugs and then used that ‘proof’ as evidence that larger methods should be avoided. Well, fear not. A group of researchers from Stanford did an empirical analysis of OS bugs, and found:

Figure 5 shows that as functions grow bigger, error rates increase for most checkers. For the Null checker, the largest quartile of functions had an average error rate almost twice as high as the smallest quartile, and for the Block checker the error rate is about six times higher for larger functions. Function size is often used as a measure of code complexity, so these results confirm our intuition that more complex code is more error-prone.

Some of our most memorable experiences examining error reports were in large, highly complex functions with contorted control flow. The higher error rate for large functions makes a case for decomposition into smaller, more understandable functions.

This finding is not unique, though it nicely captures the issue. During my time in graduate school in a class on advanced topics in software engineering, we did a unit on the relationship between various coding practices and likelihood of bugs. A consistent theme is that as function size grows, number of defects per line of code grows (in other words, the number of defects per function grows faster than the number of lines per function).
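One way to make that parenthetical concrete, as a sketch with notation that is mine rather than the study’s: let D(n) be the expected number of defects in a function of n lines, and d(n) = D(n)/n the defects per line. If d grows with n, then for two sizes n_1 < n_2:

```latex
\[
\frac{D(n_2)}{D(n_1)} \;=\; \frac{n_2\, d(n_2)}{n_1\, d(n_1)} \;>\; \frac{n_2}{n_1}
\qquad \text{whenever } d(n_2) > d(n_1) > 0 .
\]
```

So, under that assumption, doubling a function’s length more than doubles its expected defect count.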

So, What Now?

In the end, my response is quite simply this: get used to a more factored and distributed paradigm. Don’t worry about being lost in files and stack traces in the debugger. Why not? Well, because if you follow Uncle Bob Martin’s advice about factoring methods to be 4 or 5 lines, you wind up with methods that descriptively tell you what they’re going to do and do it perfectly. In other words, you don’t need to step into them because they’re too simple and concise for things to go wrong.
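To give a rough picture of what “methods that descriptively tell you what they’re going to do” might look like, here is a minimal sketch in Python. Everything in it is hypothetical and chosen only for illustration: the `LineItem` type, the function names, and the flat 8% tax rate are my assumptions, not anything from a real code base.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class LineItem:
    """Hypothetical order line; prices are in cents to avoid float rounding."""
    unit_price_cents: int
    quantity: int


TAX_RATE_PERCENT = 8  # assumed flat rate, for illustration only


def subtotal_cents(items: Sequence[LineItem]) -> int:
    # Sum of price times quantity over every line item.
    return sum(item.unit_price_cents * item.quantity for item in items)


def tax_cents(amount_cents: int) -> int:
    # Integer tax, rounded down to the nearest cent.
    return amount_cents * TAX_RATE_PERCENT // 100


def order_total_cents(items: Sequence[LineItem]) -> int:
    # Reads like a sentence: the total is the subtotal plus tax on it.
    subtotal = subtotal_cents(items)
    return subtotal + tax_cents(subtotal)
```

When stepping through a caller of `order_total_cents`, there is little reason to step into any of these: each one is short enough to check by reading it.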

In this fashion, your debugging becomes different. You don’t have a pen and paper, a spreadsheet, a stack trace window, and row after row of “immediates” all to keep track of what on Earth is going on. You set a breakpoint somewhere, and any method calls are innocent until proven guilty. You step over everything until something fishy happens (or until you become a client of some lumbering beast of a method that someone else wrote, which is virtually assured of having defects). This approach is almost universally rejected at first but infectious with time. I know that, as a “no bigger than the screen” guy originally, my initial reaction to the idea of all methods being 4 or 5 lines was “that’s stupid”. But try it sometime and you won’t go back.

Bye, Bye Debugger!

If you combine small factored methods and unit tests (which tend to have a natural synergy), you will find that your debugger skills begin to atrophy. Rather than reasoning about the code at runtime, you reason about it at compile time. And, that’s a powerful and important concept.

Reasoning about code at run time is programming by coincidence, as made famous by one of my favorite programming books. I mean, think about it — if you need the debugger to understand what the state of the code is and what’s going on, what you’re really saying when you build and run is, “I have no idea what this code is going to do by inspecting it, so I need to run the entire application to understand it.” You don’t understand your own code while you’re writing it. That’s a problem!

When you write small, factored methods and generally tested and decoupled code, you don’t have this problem. Take this to its logical conclusion and imagine a method that takes two int parameters and returns an int representing their sum. Do you need to set breakpoints and watches, tag immediate variables and look at a stack trace to know what this method will do? Of course not! You can reason about this method at compile time and take for granted that it will do the right thing at run time. When you write code like this, you’re telling the application how to behave. When you find yourself immersed in the debugger for three quarters of your day, you’re not dictating how the application will behave. Instead, you’re begging it to work as a kind of prayer since it’s pretty much out of your hands what’s going to happen. Don’t buy it? How many times have you been at your desk with a deadline looming saying “please, just work — why won’t you just work!?!”
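The sum example is small enough to write out in full. This is only a sketch, in Python with type hints standing in for the `int` signature described above; the names `add` and `test_add_returns_sum` are mine. Python does not enforce the hints at run time, so “compile time” here just means reading the code (or running a static checker) before anything executes.

```python
def add(first: int, second: int) -> int:
    # Nothing to step through: the body is the whole specification.
    return first + second


def test_add_returns_sum() -> None:
    # A unit test pins the behavior down without launching the application.
    assert add(2, 2) == 4
    assert add(-3, 3) == 0
    assert add(0, 0) == 0


test_add_returns_sum()
```

If the test ever did fail, it would also be the natural place to set a breakpoint, rather than the entry point of the whole application.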

This isn’t to say that I never use the debugger. But, with a combination of TDD, a continuous testing tool, and small, factored methods, it’s fairly rare. Generally, I just use it when my stuff is integrated with code not done this way. For my own stuff, if I ever do use it, it’s from the entry point of a unit test and not the application.

The cleaner the code that I write, the more my debugger skills atrophy. I look on in amazement at peers who are incredible with the debugger, and I say that with no irony. Some of them can get it to do things I didn’t realize were possible and that I freely admit are very cool. I don’t know how to do these things because I’m out of practice. But, I consider that good. If you’re getting a lot of practice de-bug-ing your code, it means you’re getting a lot of practice writing code with bugs in it.

So, let’s keep those methods small and get out of the practice of generating bugs.

(By the way, I’m going to be traveling overseas for the next couple of weeks, so this may be my last post for a while.)

“Bye, Bye Debugger!” That’s not just bold, it’s stupid. Like “bye bye, oscilloscope” by an EE guy. If just clean code makes you not need a debugger, your programs probably don’t do anything particularly interesting. There is enough opportunity for bugs besides foul program structure. And even if you think you have a crystal clear structure, you may be wrong. Having said that, I agree on keeping things short and tidy. But again, setting some arbitrary number of lines as a maximum function length to make things good seems silly and besides the point, too. Sometimes there just is a…